Graphics card architecture

In this section you will find an introduction to graphics card architecture. Our focus is on the basic concepts behind modern graphics cards and, along the way, we will also introduce the associated terminology. With this knowledge, you will be better equipped to find your way around the (overwhelming) number of graphics cards on the market. Finally, the focus in this section is more on real-time graphical applications.

We will start with an explanation of 3D graphics, after which we will take a closer look at the general concepts involved in high-end graphics cards.

3D graphics

The world we live in is three dimensional, so in 3D graphics we try to preserve this third dimension as much as possible. With computer graphics, this is done by modeling in three dimensions, with these models being built from meshes and textures. A mesh is a collection of 'corner points' (we call them vertices), lines and faces (called polygonals) which, more or less (depending on the size and number of the 'mazes'), resemble real-life objects. To add realism, each surface of the faces is given some texture, which is nothing more than an image (showing a texture).

So, by now we have a representation of an object in 3D. By adding more objects we can build an entire scene. And by letting the objects move we realize a 3D animation. In the relevant software, the 3D models are represented by so-called vectors, and all calculations (for example to implement the movement of a 3D object) are conducted with vector calculus.

However, computer displays can only output two dimensional images. These images are built from pixels which are ordered in a raster. Such a 2D raster image is something else entirely to the 3D vector based model!

So, an important task of the graphics card is to translate 3D models into 2D rastered images, which can be output to a display. This process is called 3D rendering. Note also that when we use a 3D display, we still have to run this rendering process because all 3D displays use 2D raster images that are taken from two different viewpoints (analogous to how our brain makes a 3D image out of two 2D views from our eyes).

Now it’s time to look at graphics cards and their GPU (Graphical Processor Unit), which is the unit doing all the hard rendering work.

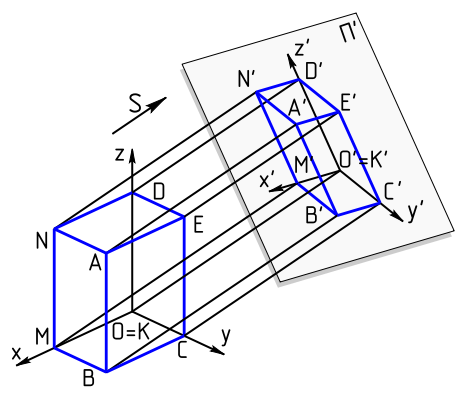

3D projection (source: Wikipedia)

|

The GPU

In order to understand the architecture of a GPU, we will look at the three cornerstones of its design.

The GPU is also some kind of CPU

Don't be fooled. GPUs use common CPU concepts. The simplest GPU model is essentially a CPU with lots of cores, meaning that you will again encounter concepts like ALUs (as they use lots of them), L1 and L2 caches, instructions and data, pipelined CPU designs, and threads. So, if you want to understand GPUs you should master CPU designs first. If you have already done this, you will already know a lot about the GPU.

The graphics pipeline

The concept of the graphics pipeline is what really sets it apart from general CPUs, although the idea of a pipeline is the same as that used by general purpose CPUs.

The graphics pipeline is built in stages. Every stage is specialized in precisely one element of the rendering process. Once we are familiar with these tasks, we will be able to recognize them in the designs of the GPU. Let’s now take a look at the separate stages (we will deliberately take a simplified approach here).

Transformation

Pipelines begin with the intake of a model in the vector format. It will then translate, rotate and scale the model as requested by the application software (for example, a game).

Per vertex lighting

Each vertex ('corner point' in the model) is lit according to defined light sources. Values between the vertices are interpolated.

Viewing transformation

Then, the observer's viewpoint (or virtual camera position) is taken into account and the model is transformed from its world coordinate system to the observer's one.

Projection transformation

Again, the model is transformed, now putting it into perspective. Objects that are farther away become smaller.

Clipping

This is a straight forward step. Everything that can't be displayed is simply cut away. This is done to prevent calculations that would be useless because their results would never be seen.

Rasterization

Here we are going to turn the vector based 3D model into a 2D raster image. This is done by projecting the 3D model onto a 2D surface. This task involves matrix calculus and is executed by dedicated circuits in the graphics card. Every pixel must also get a color, which is achieved by per pixel shaders.

A shader is a small software program that (in this case) calculates the color of a pixel.

Texture & fragment shading

Finally, faces are filled in with their assigned texture by rotating and scaling them appropriately. This is also carried out by a separate stage in the grahics pipeline.

Now that we are familiar with what is executed in the graphics pipeline, the design of a graphics card becomes much clearer. The graphics pipeline cannot, however, be one to one pinpoint accurate in its designs. This is because the first stages are mainly conducted under program control, such as with an ordinary, general CPU. Most designs have some kind of specialized circuits which support the different stages in the pipeline. However, they differ between designs. Texture units are separate circuits in the GPU design.

Parallel architecture: cores and threads

The cornerstone of every GPU design is its support for massive parallel computing. This is also necessary because of the many objects that need to be processed. Fortunately, 3D models are largely built from independent objects. This makes them well suited for parallel execution.

The way the GPU handles this parallelism is by implementing many cores (NVidia calls them CUDAs, each containing up to four ALUs, while AMD talks about Stream Processing Units). The software uses all of these cores (we are talking about hundreds of them) by running threads. The large numbers of cores are the most visible design pattern when looking at a GPU design. You will also find some mechanisms in the design that are responsible for an efficient way of allocating all of these threads (thousands) to the available cores. The goal here is to use all of the cores and other resources as much as possible.

APIs

The Application Program Interface is the interface that software must use to get at the GPU's functionality. There are two APIs commonly in use: OpenGL and Direct-X. Both interfaces hide all of the GPU's technical details from the program. Instead, they expose a functional model of the graphics pipeline as discussed earlier.

Recent developments

There are two recent developments worth noting. The first is the change in architecture from a specialized graphics engine to a more general parallel computing platform. The second is the incorporation of functionality other than simply graphics oriented roles, like the support for physical models (collision, fluids, etc.).

Comments are disabled.